System has applications in social interactions, prosthetics, telemedicine and entertainment

Imagine holding hands with a loved one on the other side of the world. Or feeling a pat on the back from a teammate in the online game “Fortnite.”

In a study published in the journal Nature, Northwestern University scientists describe the development of a thin, wireless system that adds a sense of touch to any virtual reality (VR) experience. Not only does this platform potentially add new dimensions to our long-distance relationships and entertainment, but the technology also provides prosthetics with sensory feedback and lends telemedicine a human touch.

Referred to as an “epidermal VR” system, the device communicates touch through a fast, programmable array of miniature vibrating actuators embedded into a thin, soft and flexible material. The 15-centimeter-by-15-centimeter sheet-like prototypes comfortably laminate onto the curved surfaces of the skin without bulky batteries and cumbersome wires.

“People have contemplated this overall concept in the past, but without a clear basis for a realistic technology with the right set of characteristics or the proper form of scalability. Past designs involve manual assemblies of actuators, wires, batteries and combined internal and external control hardware,” said John Rogers, PhD, a bioelectronics pioneer and the Louis Simpson and Kimberly Querrey Professor of Materials Science and Engineering, Biomedical Engineering and Neurological Surgery. “We leveraged our knowledge in stretchable electronics and wireless power transfer to put together a superior collection of components, including miniaturized actuators, in an advanced architecture designed as a skin-interfaced wearable device — with almost no encumbrances on the user. We feel that it’s a good starting point that will scale naturally to full-body systems and hundreds or thousands of discrete, programmable actuators.”

“We are expanding the boundaries and capabilities of virtual and augmented reality,” said Yonggang Huang, PhD, the Walter P. Murphy Professor of Civil and Environmental Engineering and Mechanical Engineering in the McCormick School of Engineering, who co-led the study with Rogers. “By comparison to the eyes and the ears, the skin is a relatively under-explored sensory interface that could significantly enhance experiences.”

How it works

Rogers and Huang’s most sophisticated device incorporates a distributed array of 32 individually programmable, millimeter-scale actuators, each of which generates a discrete sense of touch at a corresponding location on the skin. Each actuator resonates most strongly at 200 cycles per second, where the skin exhibits maximum sensitivity.

“We can adjust the frequency and amplitude of each actuator quickly and on-the-fly through our graphical user interface,” Rogers said. “We tailored the designs to maximize the sensory perception of the vibratory force delivered to the skin.”

The patch wirelessly connects to a touchscreen interface, typically on a smartphone or tablet. When a user touches the touchscreen, that pattern of touch transmits to the patch. If the user draws an “X” pattern on the touchscreen, for example, the device produces a sensory pattern, simultaneously and in real time, in the shape of an “X” through the vibratory interface to the skin.
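To make the touchscreen-to-skin mapping concrete, here is a minimal Python sketch of how a drawn pattern might be translated into a set of active actuators. It is an illustration only, not the Northwestern team’s software: the 4-by-8 grid layout, the normalized touch coordinates and the function names (`touches_to_actuators`, `draw_x`, `render`) are all assumptions. The study specifies only that the array holds 32 individually programmable actuators.

```python
from typing import Iterable, List, Set, Tuple

# Hypothetical layout: the article gives a total of 32 actuators,
# not their arrangement. A 4 x 8 grid is assumed for illustration.
ROWS, COLS = 4, 8

Point = Tuple[float, float]   # normalized touchscreen coordinates in [0, 1]
Cell = Tuple[int, int]        # (row, col) index of an actuator


def touches_to_actuators(points: Iterable[Point],
                         rows: int = ROWS,
                         cols: int = COLS) -> Set[Cell]:
    """Map each touch point to the actuator whose grid cell contains it."""
    active: Set[Cell] = set()
    for x, y in points:
        # Clamp so that a touch exactly on the far edge (1.0) stays in range.
        col = min(int(x * cols), cols - 1)
        row = min(int(y * rows), rows - 1)
        active.add((row, col))
    return active


def draw_x(samples: int = 50) -> List[Point]:
    """Sample points along both diagonals of the screen, forming an 'X'."""
    points: List[Point] = []
    for i in range(samples):
        t = i / (samples - 1)
        points.append((t, t))        # top-left to bottom-right stroke
        points.append((t, 1.0 - t))  # bottom-left to top-right stroke
    return points


def render(active: Set[Cell], rows: int = ROWS, cols: int = COLS) -> str:
    """Return a text picture of the grid: '#' is vibrating, '.' is idle."""
    return "\n".join(
        "".join("#" if (r, c) in active else "." for c in range(cols))
        for r in range(rows)
    )


if __name__ == "__main__":
    print(render(touches_to_actuators(draw_x())))
```

Running the script prints a coarse text picture of the grid with the two diagonals switched on. A real system would also have to set each actuator’s frequency and amplitude and send the result over the wireless link, which this sketch leaves out.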

When video chatting from different locations, friends and family members can reach out and virtually touch each other — with negligible time delay and with pressures and patterns that can be controlled through the touchscreen interface.

“You could imagine that sensing virtual touch while on a video call with your family may become ubiquitous in the foreseeable future,” Huang said.

The actuators are embedded into an intrinsically soft and slightly tacky silicone polymer that adheres to the skin without tape or straps. Wireless and battery-free, the device communicates through near-field communication (NFC) protocols, the same technology used in smartphones for electronic payments.

“With this wireless power delivery scheme, we completely avoid the need for batteries, with their weight, size, bulk and limited operating lifetimes,” Rogers said. “The result is a thin, lightweight system that can be worn and used without constraint, indefinitely.”

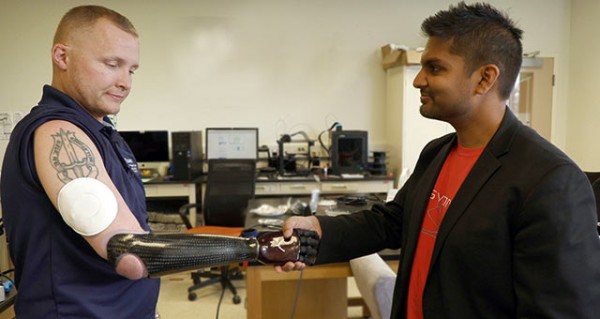

Veteran describes importance of sensory feedback

Everyone can imagine how this type of technology could be combined with a VR headset to create more interactive and immersive gaming or entertainment experiences. But for U.S. Army veteran Garrett Anderson, epidermal VR might provide a much-needed solution to a real-life problem.

At 4 a.m. on Oct. 15, 2005, Anderson was ambushed during his deployment in the Iraq War and lost his right arm just below the elbow.

“A bomb exploded under my truck,” Anderson said. “It blew the entire engine out of the vehicle. Then shrapnel came through the vehicle and severed my arm, which was hanging on by tendons.”

Anderson recently tried Northwestern’s system, integrated with his prosthetic arm. When wearing the patch on his upper arm, Anderson could feel sensations from his prosthetic fingertips transmitted to his arm. The vibrations felt more or less intense, depending on the firmness of his grip.

“Say that I’m grabbing an egg or something fragile,” said Anderson, who is now the outreach coordinator at the University of Illinois’ Chez Veterans Center. “If I can’t adjust my grip, then I might crush the egg. I need to know the amount of grip that I’m applying, so that I don’t hurt something or someone.”
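The grip feedback Anderson describes, in which a firmer grip produces a stronger vibration, can be sketched as a simple mapping from measured force to vibration intensity. The Python example below is a hypothetical illustration: the linear relationship, the 20-newton ceiling and the function name `grip_to_amplitude` are assumptions, since the article does not say how the prosthetic’s sensors are read or how the signal is scaled.

```python
def grip_to_amplitude(force_newtons: float, max_force: float = 20.0) -> float:
    """Map a grip force to a vibration amplitude between 0.0 and 1.0.

    Assumes a linear relationship and a hypothetical 20 N ceiling;
    neither value comes from the study.
    """
    if max_force <= 0:
        raise ValueError("max_force must be positive")
    # Clamp the reading so sensor noise or overload cannot leave the range.
    clamped = max(0.0, min(force_newtons, max_force))
    return clamped / max_force


if __name__ == "__main__":
    for force in (0.0, 5.0, 10.0, 25.0):
        print(f"{force:5.1f} N -> amplitude {grip_to_amplitude(force):.2f}")
```

Under these assumptions, a light hold on an egg would register as a faint vibration on the upper arm, while a grip at or beyond the ceiling would produce the strongest vibration the patch can deliver.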

‘I have never felt them with my right arm’

As people who have had amputations use the device, the experience could become more seamless.

“Users develop an ability to sense touch at the fingertips of their prosthetics through the sensory inputs on the upper arm,” Rogers explained. “Over time, your brain can convert the sensation on your arm to a surrogate sense of feeling in your fingertips. It adds a sensory channel to reproduce the sense of touch.”

Anderson believes this device could potentially “trick” his brain in a way that relieves phantom pain. He also imagines that it could allow him to interact with his children in a new way.

“I lost my arm 15 years ago,” he said. “My kids are 13 and 10, so I have never felt them with my right arm. I don’t know what it’s like when they grab my right hand.”

‘A starting point’

Rogers views the current device as a starting point. “This is our first attempt at a system of this type,” he said. “It could be very powerful for social interactions, clinical medicine and applications that we cannot conceive of today, beyond the obvious opportunities in gaming and entertainment.”

He and Huang are already working to make the current device slimmer and lighter. They also plan to exploit different types of actuators, including those that can produce heating and stretching sensations. With thermal inputs, for example, a person might be able to sense how hot a cup of coffee is through prosthetic fingertips.

The Northwestern team believes the overall engineering framework can accommodate hundreds of actuators with dimensions significantly smaller than those used currently, which have diameters of 18 millimeters and thicknesses of 2.5 millimeters.

Eventually, the devices could be thin and flexible enough to be woven into clothes. People with prosthetics could wear VR shirts that communicate touch through their fingertips. And along with VR headsets, gamers could wear full VR suits to become fully immersed in fantastical landscapes.

“Virtual reality is a very important emerging area of technology,” Rogers said. “Currently, we’re just using our eyes and our ears as the basis for those experiences. The community has been comparatively slow to exploit the body’s largest organ: the skin. Our sense of touch provides the most profound, deepest, emotional connection between people.”

The study, “Skin-integrated wireless haptic interfaces for virtual and augmented reality,” was mostly funded through the Center for Bio-integrated Electronics, as part of the Querrey Simpson Institute for Bioelectronics, which was made possible by Northwestern University trustees Louis A. Simpson ’58 and Kimberly K. Querrey.