An advanced machine learning model predicted spoken language outcomes for children who received cochlear implants more accurately than traditional machine learning approaches, according to a Northwestern Medicine-led international multi-center study published in JAMA Otolaryngology – Head and Neck Surgery.

The findings support the feasibility of a single AI-based prediction model for worldwide use to identify children at risk for less language improvement after cochlear implantation, according to Nancy Young, MD, ’87 GME, professor of Otolaryngology in the Division of Pediatric Otolaryngology, who was senior author of the study.

Cochlear implants are an effective treatment for children with sensorineural hearing loss, which most commonly results from genetic causes in infants and young children. Other causes include congenital infection, ototoxic medications and trauma to the inner ear.

Cochlear implants have been shown to sustainably improve spoken language in these patients; however, when the hearing loss is severe to profound, outcomes can vary. Furthermore, there are no established approaches for predicting which patients will show improvements in language skills and which patients will require additional interventions.

“Before the cochlear implant, very few children with major hearing loss in both ears developed spoken language equivalent to children with typical hearing. Cochlear implantation, the first effective medical treatment to restore a human sense, has enabled spoken language for many of these children,” Young said. “But there is more variability in their language development compared to children without hearing loss. The long-term goal of our research is accurate prediction on the individual child level to identify at-risk children and provide them with the optimal intensity and type of therapy intervention.”

In the current study, Young and her colleagues compared the accuracy of traditional machine learning algorithms with that of deep transfer learning, an advanced machine learning technique that uses knowledge learned from one task to improve performance on another task, in predicting spoken language outcomes in children with bilateral sensorineural hearing loss who received cochlear implants.

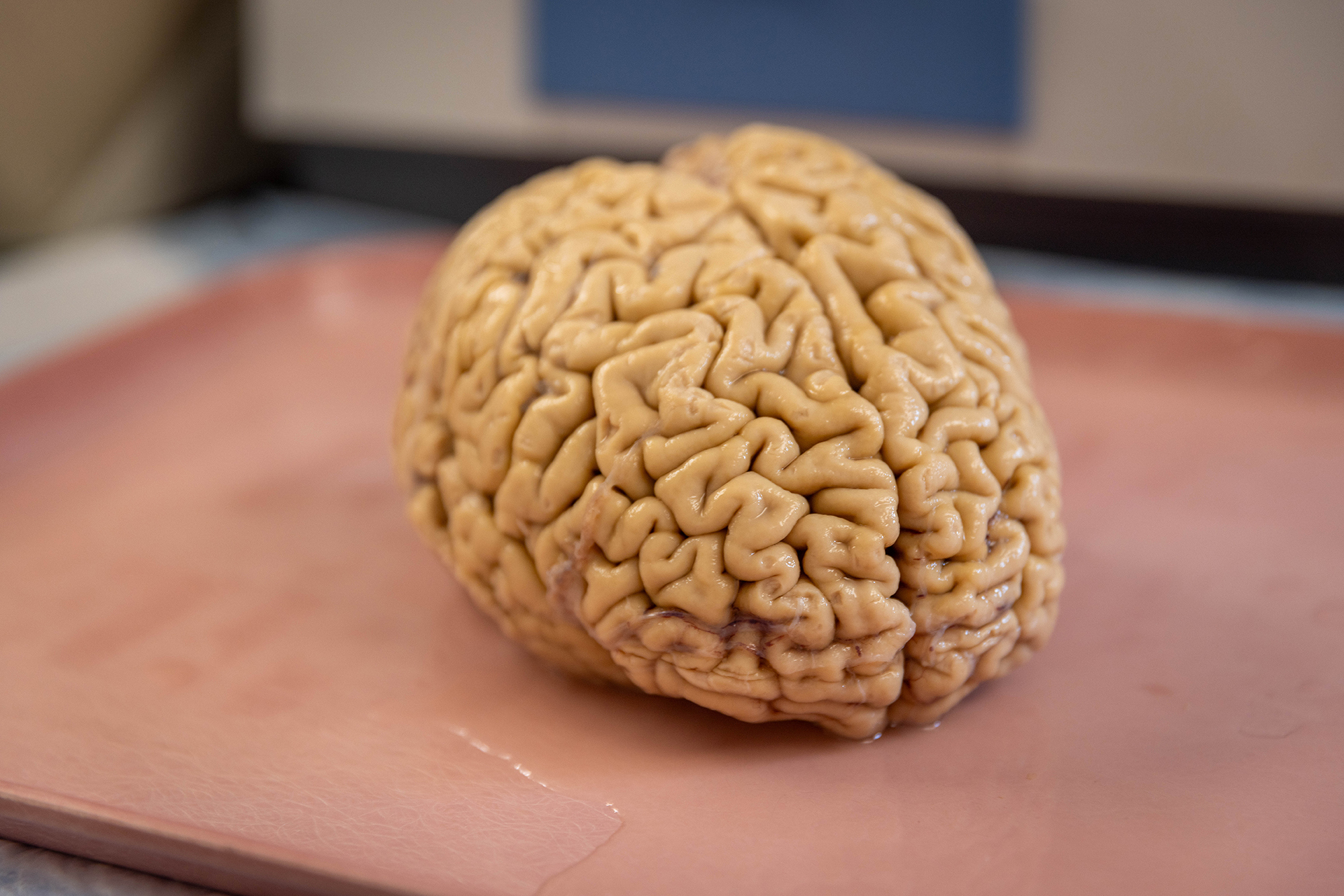

A total of 278 children with cochlear implants from English-, Spanish- and Cantonese-speaking families across three clinical centers in the U.S., Australia and Hong Kong were enrolled from July 2009 to March 2022. All children completed brain MRI scans before undergoing cochlear implant surgery.

Machine learning and deep transfer learning algorithms were trained to predict higher versus lower improvement in spoken language using information about brain structure from each child’s presurgical MRI brain scan.

Final data analyses revealed that deep transfer learning provided the most accurate prediction of spoken language improvement, both for children at each participating center and when data from different centers and from children learning different languages were combined.

Notably, the deep transfer learning algorithm outperformed traditional machine learning in predicting spoken language improvement, achieving 92.39 percent accuracy, 91.22 percent sensitivity and 93.56 percent specificity.
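
For readers less familiar with these metrics, the minimal Python sketch below shows how accuracy, sensitivity and specificity are conventionally computed from the four counts of a binary confusion matrix. The function name and the example counts are hypothetical and are not taken from the study; treating "higher improvement" as the positive class is also an assumption made only for illustration.

```python
def binary_metrics(tp, fn, tn, fp):
    """Return (accuracy, sensitivity, specificity) for a binary classifier.

    tp: positives correctly predicted as positive
    fn: positives incorrectly predicted as negative
    tn: negatives correctly predicted as negative
    fp: negatives incorrectly predicted as positive
    """
    total = tp + fn + tn + fp
    accuracy = (tp + tn) / total      # share of all predictions that are correct
    sensitivity = tp / (tp + fn)      # share of actual positives that are detected
    specificity = tn / (tn + fp)      # share of actual negatives that are detected
    return accuracy, sensitivity, specificity


# Hypothetical counts for illustration only (not study data).
acc, sens, spec = binary_metrics(tp=90, fn=10, tn=95, fp=5)
print(f"accuracy={acc:.2%} sensitivity={sens:.2%} specificity={spec:.2%}")
```

Reporting all three figures matters because accuracy alone can hide an imbalance: a model could score well overall while still missing many of the children in one of the two groups.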

The findings highlight the feasibility of developing a single prediction model that can be used worldwide to provide accurate language prediction for children with cochlear implants despite differences in family language, imaging and outcome measure protocols used by medical centers.

In addition, knowing which children are at risk for less language improvement based on brain anatomy provides a framework to test different therapy approaches and determine which are more effective for different brain types, according to Young.

“What we want to do is to develop accurate predictions so we can figure out who’s at risk and then intervene to improve their language,” Young said. “There are also many kids with normal hearing who have language disorders and delays, and we believe that brain-based prediction will be applicable to them as well.”

This work was supported by the Research Grants Council of Hong Kong (grant GRF14605119) and National Institutes of Health grants R21DC016069 and R01DC019387.