Taking a few whiffs to decide whether it is wise to drink from a carton of milk on the verge of spoiling depends on the brain’s ability to recall sensory experiences, according to new research published by Jay Gottfried, MD, PhD, associate professor in the Ken and Ruth Davee Department of Neurology.

The study, published in the September 6 edition of Neuron, offers insight into how the nervous system accumulates perceptual information to help humans make decisions. This type of neurophysiological accumulation is well documented in the animal visual system, but researchers have had a poor understanding of how such mechanisms occur in the human brain.

“In an ideal world we get a sensory input, we process it very easily, and we act upon it,” Gottfried said. “But in many situations those sensory inputs, whether they’re smells, sounds, or visual stimulation, are less constant, more ambiguous, and more unpredictable, and the brain needs to come up with a way of devising its best estimate of what’s going on out there.”

The experiment, conducted by neuroscience PhD candidate Nick Bowman, relied on participants smelling an odor and determining whether it contained more clove or more lemon scent. Researchers created mixtures ranging from 100 percent of either scent through seven different blends, one of them a 50-50 split. They recorded the number of sniffs participants took for each mixture while also measuring brain activity using functional magnetic resonance imaging (fMRI).

Behaviorally, researchers found that when one of the scents was predominant in the mixture, subjects needed just one sniff. With the more ambiguous or difficult mixtures, participants took multiple sniffs, suggesting that they benefited from the additional inhalations by accumulating information from a noisy, or uncertain, environment before making a decision.

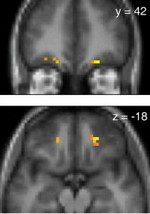

The fMRI data showed that activity in the orbitofrontal cortex (OFC) increased and peaked at the time a choice was made, supporting the OFC’s role in cognitive decision-making. Combined, the behavioral and fMRI data suggest a key role for the OFC in resolving sensory uncertainty, providing evidence for accumulation models of human perceptual decision-making.

“There is research to suggest that one mechanism that the brain can use to handle confusing information is to accumulate the information and integrate it over time,” Gottfried said. “As the event unfolds, you are taking bits and pieces of information and hopefully you can assemble enough of it to make the right decision. If the environment is noisy, it will take you longer to accumulate what you need to act.”
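The accumulation idea Gottfried describes can be illustrated with a toy simulation. The sketch below is not the researchers' model or analysis; it is a generic evidence accumulator in which every name and number (the decision threshold, the noise level, the mapping from mixture to evidence) is an assumption chosen only for illustration. Each simulated sniff adds a noisy sample of evidence, and a choice is made once the running total is far enough from zero.

```python
import random


def sniffs_to_decide(clove_fraction, threshold=2.0, noise_sd=1.0,
                     max_sniffs=50, rng=None):
    """Simulate one trial and return (choice, number_of_sniffs).

    All parameters are hypothetical, not values from the study.
    """
    rng = rng or random.Random()
    # Average evidence per sniff: +1 for pure clove, -1 for pure lemon,
    # 0 for a 50-50 mixture (an assumed linear mapping).
    drift = 2.0 * clove_fraction - 1.0
    evidence = 0.0
    for sniff in range(1, max_sniffs + 1):
        # Each sniff contributes the mixture signal plus random noise.
        evidence += drift + rng.gauss(0.0, noise_sd)
        # Stop as soon as the accumulated evidence crosses either bound.
        if abs(evidence) >= threshold:
            break
    choice = "clove" if evidence >= 0 else "lemon"
    return choice, sniff


def mean_sniffs(clove_fraction, trials=2000, seed=0, **kwargs):
    """Average number of sniffs over many simulated trials."""
    rng = random.Random(seed)
    total = sum(sniffs_to_decide(clove_fraction, rng=rng, **kwargs)[1]
                for _ in range(trials))
    return total / trials


if __name__ == "__main__":
    for fraction in (1.0, 0.75, 0.5):
        print(f"clove fraction {fraction:.2f}: "
              f"{mean_sniffs(fraction):.2f} sniffs on average")
```

Under these assumptions, the 50-50 mixture takes more simulated sniffs on average than a pure scent, in line with the behavioral pattern described above. Lowering `threshold` makes the simulated decision faster but based on less evidence.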

Although humans tend to solve perceptual tasks much faster than can be recorded by fMRI scanners, the group took advantage of the fact that human olfactory perception is relatively slow, particularly for mixtures of odorants.

“From a basic science point of view, this study is important because it really highlights for the first time that the human brain does integrate noisy information,” Gottfried said. “It’s been inferred from animal models but never shown in humans. It helps fill in a gap in our understanding and highlights the role of the OFC region as an integrator, where it is taking and absorbing information from many different channels in order to influence behavior most effectively.”

The extent to which the OFC is able to adjust the rate of information accumulation could provide a novel explanation for the disorders observed in patients with orbitofrontal pathology. For example, the high incidence of hallucinations, delusions, and disinhibition in schizophrenia could be due to a hasty or exaggerated accumulation process, such that noisy sensory inputs are too readily confirmed as perceptual facts. This would lead to distortions of perception and irrational choices poorly grounded in the available sensory evidence.

This research was funded with grants from the National Institutes of Health and the National Institute on Deafness and Other Communication Disorders.