‘We want to make fetal monitoring as easy as taking your temperature’

Northwestern Medicine and Google are collaborating on a project to bring fetal ultrasound to developing countries by combining artificial intelligence (AI), low-cost hand-held ultrasound devices and a smartphone.

The project will develop algorithms that enable AI to read ultrasound images captured with these devices by lightly trained community health workers, and even by pregnant people at home, with the aim of assessing the wellness of both the birthing parent and the baby.

[pullquote]

300,000

The number of maternal deaths each year, most of which occur in lower- and middle-income countries[/pullquote]

“We want to make high-quality fetal ultrasound as easy as taking your temperature,” said Mozziyar Etemadi, MD, PhD, assistant professor of Anesthesiology and leader of the project at Northwestern. He is also a Northwestern Medicine physician and medical director of advanced technologies for the health system.

The World Health Organization recommends an ultrasound assessment before 24 weeks of pregnancy. This helps assess the health of the birthing parent and baby, plan the course of pregnancy and monitor for early risks and complications. Patients around the globe die during childbirth in high numbers. An estimated 300,000 maternal deaths and 2.5 million perinatal deaths occur each year, with 94 percent occurring in lower- and middle-income countries.

Ultrasound technology is becoming more portable and more affordable. Yet, up to half of all birthing parents in developing countries are not screened while pregnant.

That’s because existing hand-held devices require a trained technician to precisely manipulate the ultrasound probe to capture the right images. Then, the image has to be interpreted by a radiologist or specially trained obstetrician. Trained technicians and physicians are in limited supply in many underserved communities and developing countries. That is where AI comes in.

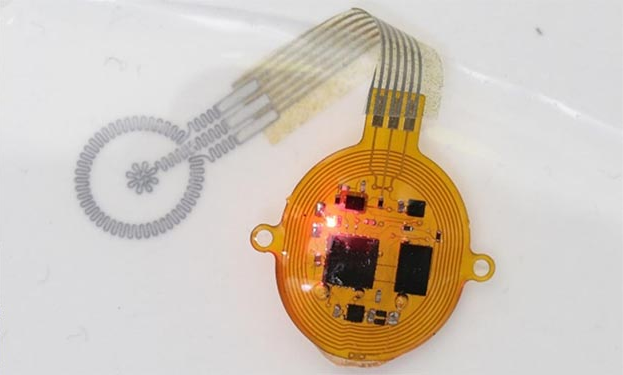

“The new AI algorithm will be able to use multiple imperfect ultrasound images taken with inexpensive, handheld ultrasound devices and interpret them as if they are perfect images,” Etemadi said. Raw ultrasound images will be sent to a smartphone, where AI will distinguish critical features like fetal age and position.

The low-cost device will take the image and send it to the smartphone, where the AI will provide a read on factors like fetal age and position.

“Training a new AI requires a lot of data, and in this case, we don’t routinely collect amateur ultrasound images, so the data doesn’t exist anywhere,” Etemadi said. “We have to first create this database of amateur ultrasounds with these wireless probes.”

Once that database exists, Google Health can develop an AI to perform the fetal interpretation.

As the first step in developing the algorithms, Etemadi and his team will conduct research in which pregnant patients from Northwestern Medicine perform their own ultrasounds with a low-cost hand-held device. Northwestern technicians will also perform fetal ultrasounds on the patients, and even family members will participate. The patients will then have a regular clinical fetal ultrasound. All the images and other pregnancy-related data will be downloaded into a database.

Study participants will use hand-held ultrasound devices with Google Health’s custom application pre-installed to collect, process and deliver the fetal ultrasound “blind sweeps.” A “blind-sweep” ultrasound consists of six freehand sweeps across the abdomen that are used to generate a computer image.

The goal is to collect a broad set of data and related information, including reports on fetal-growth restriction, placental location, gestational age and other relevant conditions and risk factors. Data will be gathered across all three trimesters and from a diverse, representative group of patients. The study will collect ultrasound images from several thousand patients over the next year. All patients will consent to inclusion.

The AI will receive professional and amateur images across the many conditions that physicians typically want to monitor, such as the age of the fetus and whether it has a heart defect. With the side-by-side image captures, the AI can adapt to the amateur images and learn to interpret them more accurately.

“In the developing world, people are far away from health care, sometimes multiple days by foot,” Etemadi said. “People don’t get health care, or when they go, it’s way too late.”

“The real power of this AI tool will be to allow for earlier triaging of care, so a lightly trained community health provider can conduct scans of birthing parents. The patients don’t have to go to the city to get it. The AI will help inform what to do next – if the patient is OK or they need to go to a higher level of care. We really believe this will save the lives of a lot of birthing parents and babies.”